Build a Canvas Data Warehouse on AWS in 30 minutes!

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

Introduction

Canvas Data provides a wealth of information that can be used in many interesting ways, but there are a few hurdles that can make it hard to even get started:

- The Canvas Data API uses a different authentication mechanism than the one that you're probably already used to using with the Canvas API.

- Data is provided in compressed tab-delimited files. To be useful, they typically need to be loaded into some kind of database.

- For larger tables, data is split across multiple files, each of which must be downloaded and loaded into your database.

- The size of the data can be unwieldy for use with locally-running tools such as Excel.

- Maintaining a local database and keeping up with schema changes can be tedious.

This tutorial will show you how to build a data warehouse for your Canvas Data using Amazon Web Services. Besides solving all of the problems above, this cloud-based solution has several advantages compared to maintaining a local database:

- You can easily keep your warehouse up to date by scheduling the synchronization process to run daily.

- You can store large amounts of data very cheaply.

- There are no machines to maintain, operating systems to patch, or software to upgrade.

- You can easily share your Canvas Data Warehouse with colleagues and collaborators.

Before we get started

Before you begin this tutorial, you should make sure that you've got an AWS account available, and that you have administrator access. You'll also need an API key and secret for the Canvas Data API.

Experience with relational databases and writing SQL will be necessary in order to query your data. Experience with AWS and the AWS console will be helpful.

Be aware that you'll be creating AWS resources in your account that are not free, but the total cost to run this data warehouse should be under about $10/month (based on my current snapshot size of 380GB). There's also a cost associated with the queries that you run against this data, but typical queries will only cost pennies.

All of the code used in this tutorial can be found in GitHub:

GitHub - Harvard-University-iCommons/canvas-data-aws: Build a Canvas Data warehouse on AWS

AWS services we'll use

We'll use several different AWS services to build the warehouse:

- S3: we'll store all of the raw data files in an S3 bucket. Since S3 buckets are unlimited in size and extremely durable, we won't need to worry about running out of space or having a hard drive fail.

- Lambda: we'll use serverless Lambda functions to synchronize files to the S3 bucket. Since we can launch hundreds or even thousands of Lambda functions in parallel, downloading all of our files is very fast.

- SNS: we'll use the Simple Notification Service to let us know when the synchronization process runs.

- Glue: we'll create a data catalog that describes the contents of our raw files. This creates a "virtual database" of tables and columns.

- Athena: we'll use this analytics tool along with the Glue data catalog to query the data files directly without having to load them into a database first

- CloudFormation: we'll use AWS' infrastructure automation service to set up all of the pieces above in a few easy steps!

Let's build a warehouse!

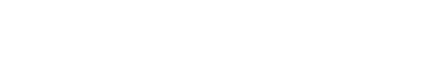

- Log into the AWS console and access the CloudFormation service.

- Click on the Create Stack button

- On the next screen, leave the Template is ready and Amazon S3 URL options selected. Below, Enter this S3 URL:

https://huit-at-public-build-artifacts.s3.amazonaws.com/canvas-data-aws/canvas_data_aws.yaml

Click Next. - On the stack details screen, first enter a name for this stack. Something like "canvas-data-warehouse" is fine. Enter your Canvas Data API key and secret in the fields provided. Enter your email address (so that you can receive updates when the synchronization process runs). You can leave the default values for the other parameters. Click Next.

- On the stack options screen, leave all of the default values and click Next.

- On the review screen, scroll to the bottom and check the box to acknowledge that the template will create IAM resources (roles, in this case). Click the Create stack button, and watch as the process begins!

It'll take several minutes for all of the resources defined in the CloudFormation template to be created. You can follow the progress on the Events tab. Once the stack is complete, check your email -- you should have received a message from SNS asking you to confirm your subscription. Click on the link in the email and you'll be all set to receive updates from the data-synchronization process.

Now we're ready to load some data!

Loading data into the warehouse

Instructure's documentation for the Canvas Data API describes an algorithm for maintaining a snapshot of your current data:

- Make a request to the "sync" API endpoint, and for every file returned:

- If the filename has been downloaded previously, do not download it

- If the filename has not yet been downloaded, download it

- After all files have been processed:

- Delete any local file that isn't in the list of files from the API

The CloudFormation stack that you just created includes an implementation of this algorithm using Lambda functions. A scheduled job will run the synchronization process every day at 10am UTC, but right now we don't want to wait -- let's manually kick off the synchronization process and watch the initial set of data get loaded into our warehouse.

To do that, we just need to manually invoke the sync-canvas-data-files function. Back in the AWS console, access the Lambda service. You'll see the two functions that are used by our warehouse listed -- click on the sync-canvas-data-files function.

On this screen you can see the details about the Lambda function. We can use the AWS Lambda Console's test feature to invoke the function. Click on the Configure test events button, enter a name for your test event (like "manual"), and click Create. Now click on the Test button, and your Lambda function will be executed. The console will show an indication that the function is running, and when it's complete you'll see the results. You'll also receive the results in your email box.

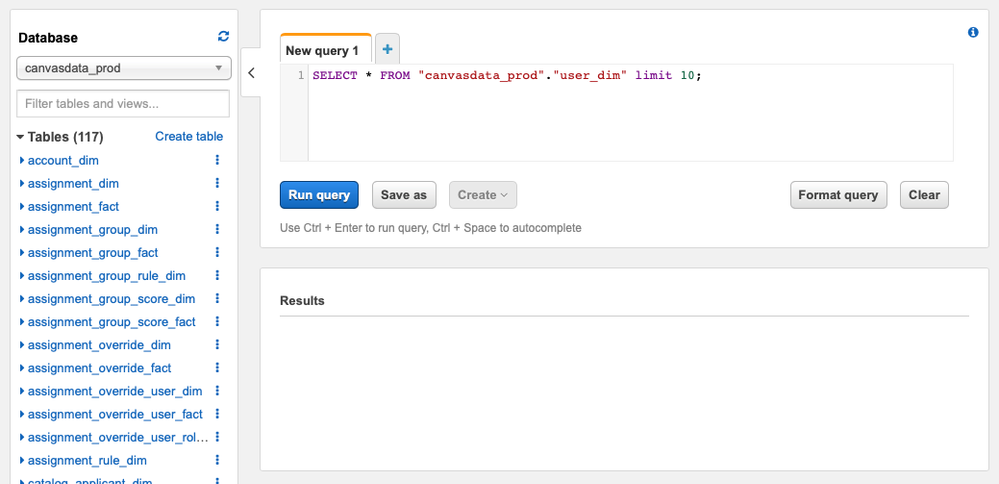

Querying your data

When the Lambda function above ran, in addition to downloading all of the raw data files, it created tables in our Glue data catalog making them queryable in AWS Athena. In the AWS console, navigate to the Athena service. You should see something similar to the screenshot below:

You can now write SQL to query your data just as if it had been loaded into a relational database. You'll need to understand the schema, and Instructure provides documentation explaining what each table contains: https://portal.inshosteddata.com/docs

Some example queries:

- Get the number of courses in each workflow state:

SELECT workflow_state, count(*) FROM course_dim GROUP BY workflow_state;

- Get the average number of published assignments per course in your active courses:

SELECT AVG(assignments) FROM (SELECT COUNT(*) AS assignments

FROM course_dim c, assignment_dim a

WHERE c.id = a.course_id

AND c.workflow_state = 'available'

AND a.workflow_state = 'published'

GROUP BY c.id);

Cleaning up

If you don't want to keep your data warehouse, cleaning up is easy: just delete the "raw_files" folder from your S3 bucket, and then delete the stack in the CloudFormation console. All of the resources that were created will be removed, and you'll incur no further costs.

Good luck, and please let me know if you run into any trouble with any of the steps above!

You must be a registered user to add a comment. If you've already registered, sign in. Otherwise, register and sign in.