Alas, I've found that making things easier for us isn't always among Canvas' priorities; their APIs often return information in the form their own apps need. No place in their apps needs to look up all of the assignments associated with a rubric, so they haven't exposed it. The demand just isn't big enough, and there is a workaround, as you mentioned. Depending on their database keys, it may be an expensive lookup on a non-keyed field, and they try to keep API calls quick and responsive.

In my work with the API, I've found many places where multiple API calls could be combined into a single one if just [insert needed piece of data] was included.

In some ways, it reminds me of a database. The API is kind of like a normalized database where each table contains part of the picture, but you may need to join several together to get the complete picture. Canvas Data, on the other hand, uses a star schema, which is denormalized and tries to anticipate all of the information you might need in one place (or with no more than one join), so information is duplicated in multiple places. Unfortunately, that means that with the API you may need to make multiple calls to get what you want, and with Canvas Data you get a lot of stuff that you may not need.

I'm going to admit I don't fully follow this discussion, because there are lots of references to API calls followed by pronouns, and I'm not always sure what the antecedent is. So forgive me if I just repeat everything that's already been said as I try to walk myself through it.

When you request an assignment (multiple ways to do this)

GET /api/v1/courses/1537564/assignments/7175288

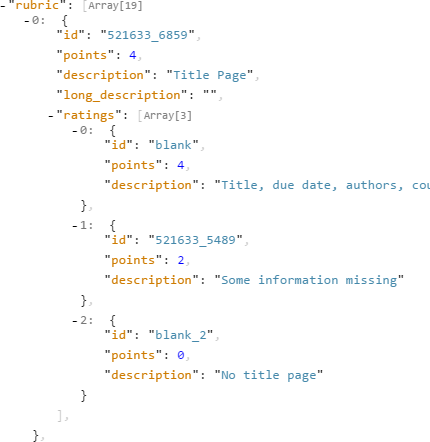

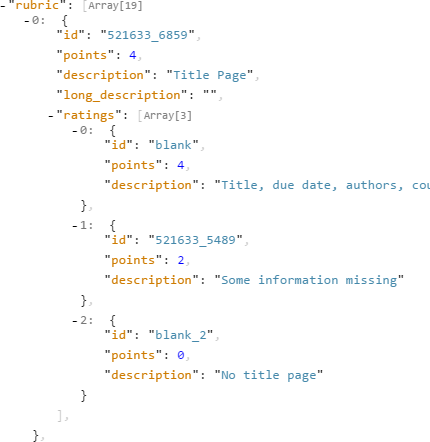

You get a rubric object returned. This particular one had 19 criteria; I'm only showing the first.

That top level ID is a context code. 521633_6859 is composed of a rubric ID and a criterion ID, separated by an underscore.
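Here's a minimal sketch of pulling that code apart, assuming the format is always a rubric ID, an underscore, and a criterion ID, as in the example above. The function name `split_criterion_code` is just something I made up for illustration, and I keep the criterion part as a string because I don't know that it's always numeric.

```python
from typing import Tuple


def split_criterion_code(code: str) -> Tuple[int, str]:
    """Split a code such as '521633_6859' into (rubric_id, criterion_id).

    Assumes the part before the first underscore is the numeric rubric ID.
    The criterion part is left as a string in case it is not always numeric.
    """
    rubric_part, criterion_part = code.split("_", 1)
    return int(rubric_part), criterion_part


rubric_id, criterion_id = split_criterion_code("521633_6859")
print(rubric_id, criterion_id)
```

The rubric ID that comes out of this is what goes into the Rubrics API request.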

Based on that, I can go to the Rubrics API and fetch the rubric information. I do need to know where the rubric came from for this part. Was it an account rubric or a course rubric? I did look into that earlier, and it might be that it copies the account rubric into the course, I'm not sure. Anyway, that's probably not the stumbling point here.

GET /api/v1/courses/1537564/rubrics/521633?include=peer_assessments&style=full

This one might take a while, as all of the peer reviews are returned at once rather than being paginated.
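For anyone scripting this, here's a rough sketch of building that request URL from the course and rubric IDs. The host `canvas.example.edu` and the function name `rubric_url` are placeholders I invented; the path and query parameters are the ones from the request above. Actually sending the request (with your access token) is left out.

```python
from urllib.parse import urlencode

# Hypothetical host; substitute your own Canvas instance.
BASE_URL = "https://canvas.example.edu"


def rubric_url(course_id: int, rubric_id: int, base_url: str = BASE_URL) -> str:
    """Build the Rubrics API URL that includes peer assessments in full style."""
    query = urlencode({"include": "peer_assessments", "style": "full"})
    return f"{base_url}/api/v1/courses/{course_id}/rubrics/{rubric_id}?{query}"


print(rubric_url(1537564, 521633))
```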

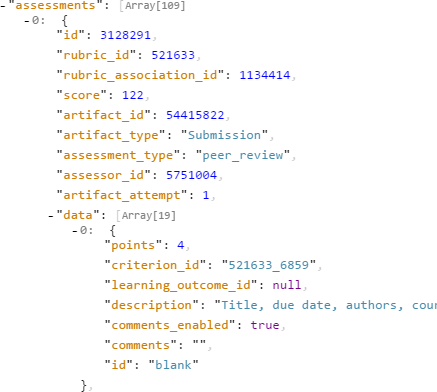

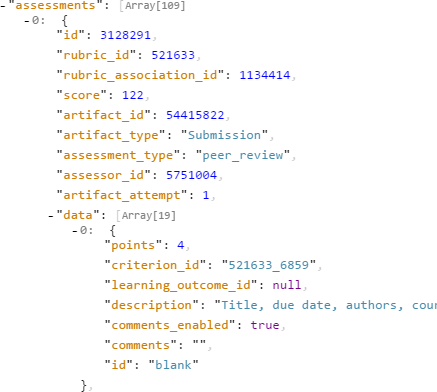

This is the top of the first assessment object. There are 109 of them in my data because there were 109 peer reviews.

Now let's look at the first assessment.

The artifact_type is "Submission", which means that the artifact_id is a submission_id. If the artifact_type were "Assignment", then the artifact_id would be an assignment_id. Notice that the Peer Reviews API allows for both types. In this case, it only makes sense for it to be tied to a submission; otherwise you would have no idea whose work the peer review was reviewing.

The assessor_id is the user ID for the person who completed the assessment.

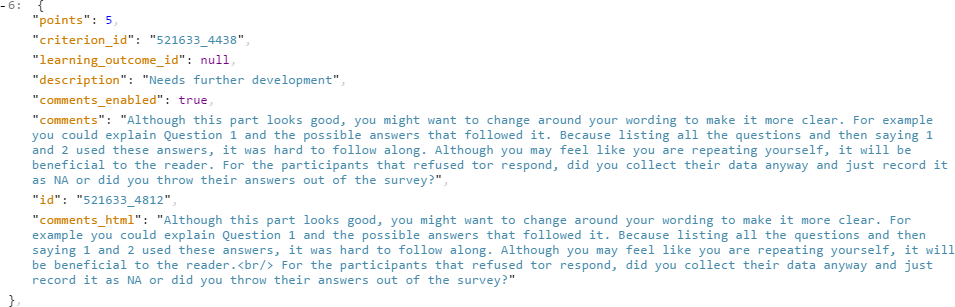

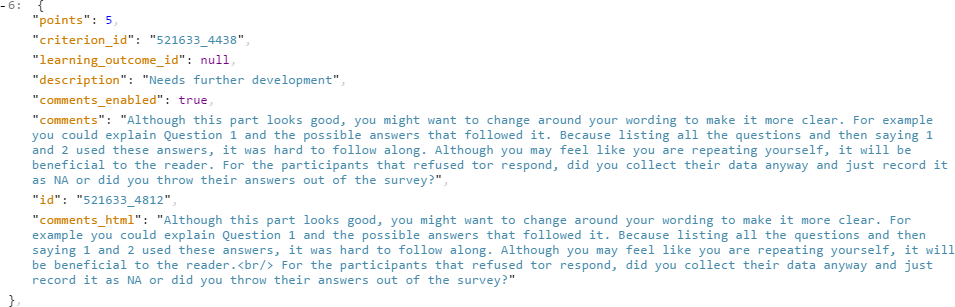

The data object contains the results for each of the 19 items in the rubric. On this first one, 4 points were awarded and no comments were left. Here's an example of what it looks like when comments are left.
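As a sketch of working with that data object, the snippet below totals the points and collects only the criteria that have comments. The key names `criterion_id`, `points`, and `comments` are my assumption about what each entry looks like (check them against your own response), and the sample values are made up, not taken from my course.

```python
def summarize_assessment(assessment: dict):
    """Return (total_points, {criterion_id: comment}) for one assessment.

    Assumes each entry in assessment["data"] has "criterion_id", "points",
    and "comments" keys; missing points or empty comments are skipped.
    """
    rows = assessment.get("data", [])
    total = sum(row.get("points") or 0 for row in rows)
    comments = {
        row["criterion_id"]: row["comments"]
        for row in rows
        if row.get("comments")
    }
    return total, comments


# Made-up sample with two criteria: one without a comment, one with.
sample = {
    "data": [
        {"criterion_id": "521633_6859", "points": 4, "comments": ""},
        {"criterion_id": "521633_6860", "points": 3, "comments": "Nice work"},
    ]
}
print(summarize_assessment(sample))
```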

The data is incomplete, but it may or may not be enough. If you just want to look at what people said, so you can review their reviews and assign the reviewer a grade, you may not need to know who they were reviewing. If you're pulling all the results together for archival purposes, you would want to know that.

The peer reviews API can pull in the other information.

GET /api/v1/courses/1537564/assignments/7175288/peer_reviews?include[]=user&include[]=submission_comments

This returns an unpaginated list of reviews.

As you said, you need to match the artifact_type and artifact_id from the rubric with the asset_type and asset_id from the peer reviews.
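Here's a minimal sketch of that matching step. It indexes the peer reviews by (asset_type, asset_id) and then looks up each rubric assessment by (artifact_type, artifact_id). Since one submission can receive several peer reviews, I also compare assessor_id; I'm assuming the peer review objects carry an `assessor_id` and a `user_id`, and all of the sample values are invented.

```python
from collections import defaultdict


def match_assessments_to_reviews(assessments, peer_reviews):
    """Pair each rubric assessment with the peer review it belongs to.

    Matches on both type and ID, then narrows by assessor_id (assumed to be
    present on both objects) so that multiple reviews of one submission
    are not mixed up.
    """
    reviews_by_asset = defaultdict(list)
    for review in peer_reviews:
        reviews_by_asset[(review["asset_type"], review["asset_id"])].append(review)

    matched = []
    for assessment in assessments:
        key = (assessment["artifact_type"], assessment["artifact_id"])
        for review in reviews_by_asset.get(key, []):
            if review.get("assessor_id") == assessment.get("assessor_id"):
                matched.append((assessment, review))
    return matched


# Invented sample data: submission 100 was reviewed by two different people.
assessments = [
    {"id": 1, "artifact_type": "Submission", "artifact_id": 100, "assessor_id": 11},
    {"id": 2, "artifact_type": "Submission", "artifact_id": 100, "assessor_id": 12},
    {"id": 3, "artifact_type": "Submission", "artifact_id": 200, "assessor_id": 11},
]
peer_reviews = [
    {"asset_type": "Submission", "asset_id": 100, "assessor_id": 11, "user_id": 21},
    {"asset_type": "Submission", "asset_id": 100, "assessor_id": 12, "user_id": 21},
    {"asset_type": "Submission", "asset_id": 200, "assessor_id": 11, "user_id": 22},
]

for assessment, review in match_assessments_to_reviews(assessments, peer_reviews):
    print(assessment["id"], "reviews user", review["user_id"])
```

Using a tuple of type and ID as the dictionary key means a submission and an assignment that happen to share the same number can't be confused with each other.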

Interestingly, the submission_comments are for the user's assessment and not the combination of user and assessor. The person leaving the submission comments in the example is not the assessor. Furthermore, they are repeated if the user received more than one peer review. Watch out that you don't duplicate the comments.
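One way to avoid the duplicates is to keep track of which comments you've already seen as you walk the list. This is only a sketch: it assumes each submission comment has a unique `id` field, and the sample data is invented.

```python
def unique_submission_comments(peer_reviews):
    """Collect submission comments across peer reviews, dropping repeats.

    Assumes each comment dict has a unique "id"; the first occurrence wins
    and the original order is preserved.
    """
    seen_ids = set()
    unique = []
    for review in peer_reviews:
        for comment in review.get("submission_comments", []):
            if comment["id"] not in seen_ids:
                seen_ids.add(comment["id"])
                unique.append(comment)
    return unique


# Invented sample: the same comment shows up under both reviews of one user.
reviews = [
    {"asset_id": 100, "submission_comments": [{"id": 501, "comment": "Good start"}]},
    {"asset_id": 100, "submission_comments": [{"id": 501, "comment": "Good start"},
                                              {"id": 502, "comment": "Check citations"}]},
]
print([c["id"] for c in unique_submission_comments(reviews)])
```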

So yes, there is some cross-referencing, but you had to know the assignment ID at the very beginning to get the codes to get the rubric. So it doesn't seem like such a big deal that the assignment isn't returned with the data, since you already know it or you wouldn't have gotten this far. It's extra fluff (sometimes fluff is good), since the assessment isn't tied to an assignment; it's tied to a submission. The use of (artifact|asset)_(type|id) allows them to specify the key to the information without having to include extra information, but you should match on both the type and the id to be safe.

But here's my question. I didn't need the rubric_association_id for anything. What is it and why is it important that it be easier to get if you don't use it? Or maybe I'm just missing the picture on it? The documentation in the API is missing a description for that line. It's not the assignment ID the rubric belongs to, since rubrics don't belong to assignments. It's not the submission ID. It's the same for every person using the rubric for an assignment (this may not be true if the rubric was changed in the middle???). It's kind of like Canvas is providing us a single ID for all of the rubrics associated with a single assignment (I don't have multiple assignments to test this with), but otherwise, it's not of use to us because it's not available anywhere else -- at least not yet? I'm not even sure what's a question and what's a statement here .!?