Having moved off Redshift this summer, I just got my hands on a SQL Server instance to import all of our Data files. I'm more comfortable with MySQL, so there was a bit of a learning curve getting things set up and imported. I saved the Requests table for last so I could work on other visualizations while it was importing.

Our uncompressed Requests text file is 286 GB and has been importing for the last 7 days. We are up to 46.9M rows (and counting as I write this), and I have no idea how much further we have to go.
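For what it's worth, a rough way to guess the total row count without reading the whole file is to sample the first chunk of lines, take the average bytes per line, and divide the file size by that. This is only a sketch: the function name and the sample size are made up for illustration, and it assumes one record per line and that the early rows are about as long as the rest, which may not hold.

```python
import os


def estimate_total_rows(path, sample_lines=100_000):
    """Estimate the row count from the file size and the average sampled line length.

    Hypothetical helper: assumes one record per line and that the first
    `sample_lines` lines are representative of the whole file.
    """
    sampled_bytes = 0
    sampled_rows = 0
    # Read in binary mode so line lengths are counted in bytes, matching getsize().
    with open(path, "rb") as f:
        for line in f:
            sampled_bytes += len(line)
            sampled_rows += 1
            if sampled_rows >= sample_lines:
                break
    if sampled_rows == 0:
        return 0
    avg_bytes_per_row = sampled_bytes / sampled_rows
    return round(os.path.getsize(path) / avg_bytes_per_row)
```

Dividing the rows imported so far by that estimate gives a ballpark percent complete, which at least beats guessing.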

Imagine the feeling when, yesterday at 6 days in, I realized that I had messed something up and the ID field is importing as NULL for every record. I'm letting it run to see how many rows I'm dealing with, but ultimately I will have to re-import the whole thing.
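One thing that might have caught this early is a trial import of a small slice of the file before committing to the full run. Here is a minimal sketch that copies the first N lines into a separate file; the function name and the default of 10,000 rows are hypothetical, and it assumes one record per line.

```python
from itertools import islice


def write_sample(src_path, dest_path, max_rows=10_000):
    """Copy the first `max_rows` lines of src_path into dest_path.

    Hypothetical helper: bytes are copied unchanged so the sample keeps the
    same delimiters and encoding as the full file.
    """
    written = 0
    with open(src_path, "rb") as src, open(dest_path, "wb") as dest:
        # islice stops after max_rows lines, so the big file is never read in full.
        for line in islice(src, max_rows):
            dest.write(line)
            written += 1
    return written
```

Importing that sample with the same settings and checking the ID column takes minutes instead of days.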

What super awesome, valuable reports have you guys gotten out of the requests table?

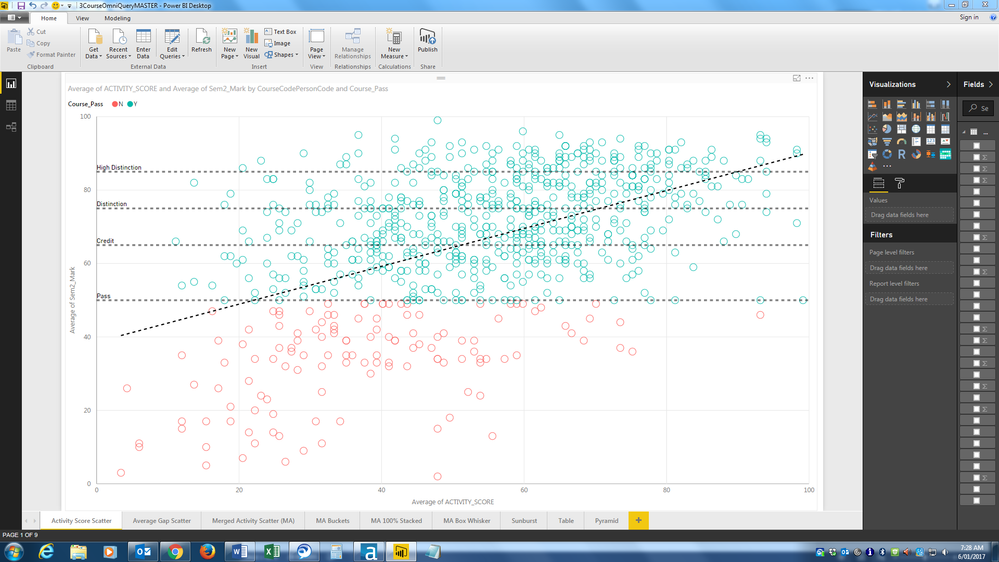

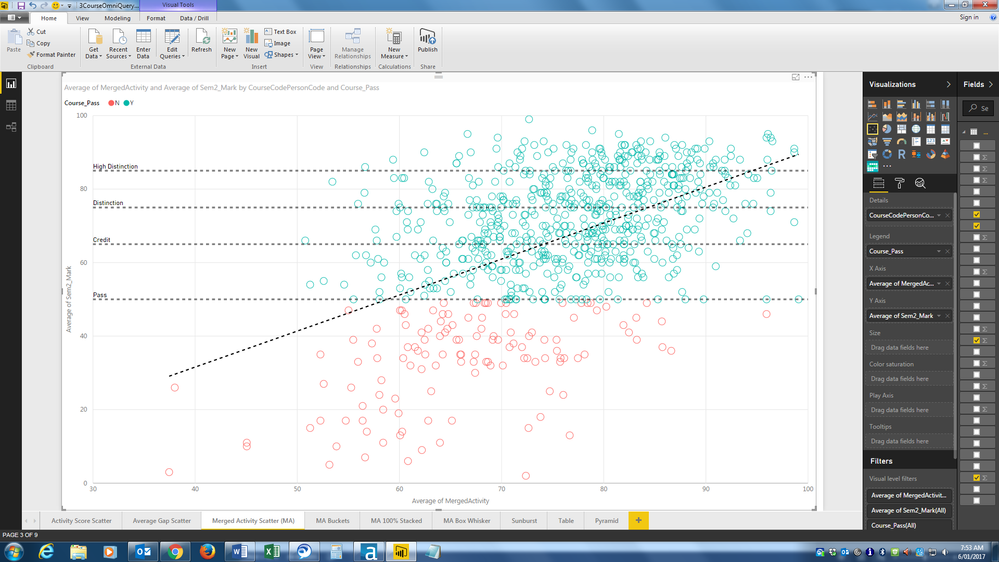

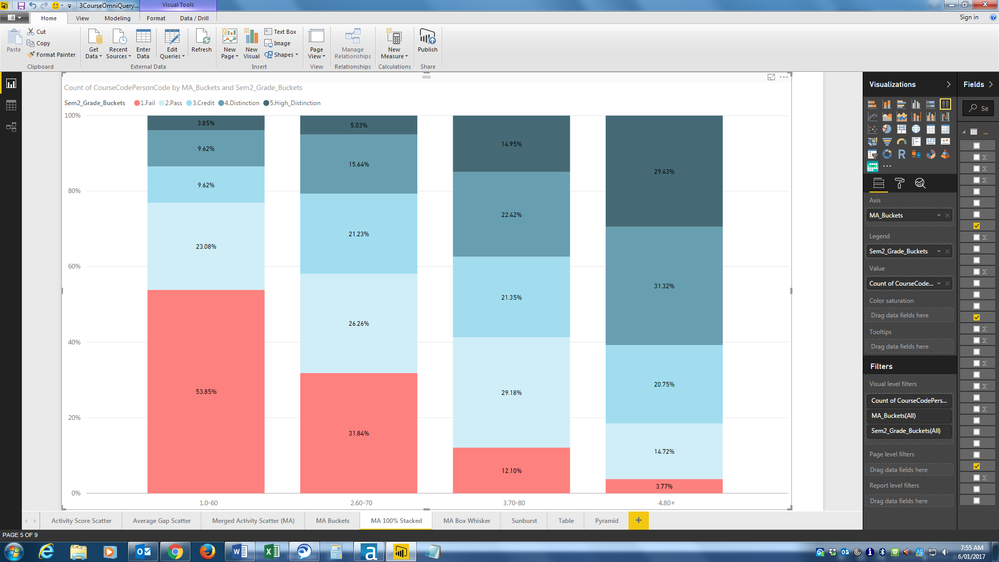

I've been able to create some nice (first pass) PowerBI reports to profile Canvas adoption using the other tables. Between import fatigue and a mild case of data-blindness, I'm wondering if I should bother with the requests file at all. Just querying that thing is going to be a resource hog, not to mention periodically updating it.

All ideas, advice, and condolences welcome.